This is a long post. There’s a lot of stuff in here. There’s no quick and dirty how-to. It’s a diagnostic record. Hope it helps.

This morning I saw log entries I’ve never noticed before. They seem to have started 9 hours ago. First, this email arrived.

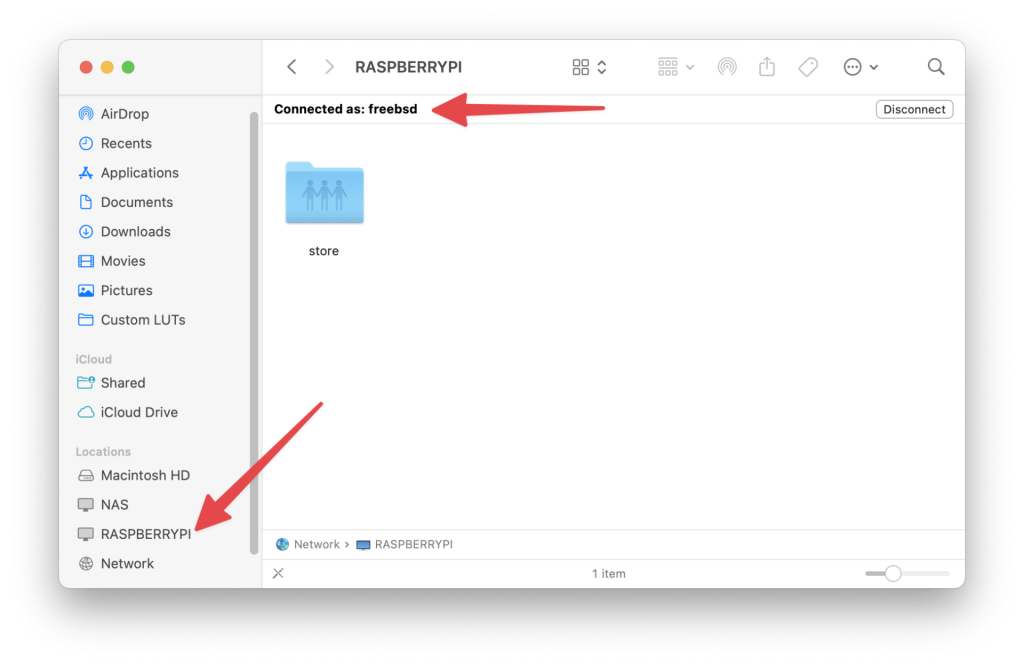

cliff2 is one of two hosts behind cliff:

[7:25 pro05 dvl ~] % host cliff

cliff.int.unixathome.org has address 10.55.0.44

cliff.int.unixathome.org has address 10.55.0.14

In this post:

- FreeBSD 15.0

- Jan 18 18:34:48 cliff2 pkg[70008]: postfix upgraded: 3.10.3,1 -> 3.10.6,1

- the host is r730-01 (that post was created before this host was updated to FreeBSD 15.0)

All 16 emails received were about cliff2, never cliff1.

Date: Wed, 18 Feb 2026 12:22:30 +0000 (UTC)

From: Mail Delivery System <MAILER-DAEMON@cliff2.int.unixathome.org>

To: Postmaster <postmaster@cliff2.int.unixathome.org>

Subject: Postfix SMTP server: errors from webserver.int.unixathome.org[10.55.0.3]

Message-Id: <20260218122230.767072C399@cliff2.int.unixathome.org>

Transcript of session follows.

Out: 220 cliff2.int.unixathome.org ESMTP Postfix

In: EHLO webserver.int.unixathome.org

Out: 250-cliff2.int.unixathome.org

Out: 250-PIPELINING

Out: 250-SIZE 10485760000

Out: 250-ETRN

Out: 250-STARTTLS

Out: 250-ENHANCEDSTATUSCODES

Out: 250-8BITMIME

Out: 250-DSN

Out: 250-SMTPUTF8

Out: 250 CHUNKING

In: STARTTLS

Out: 220 2.0.0 Ready to start TLS

In: EHLO webserver.int.unixathome.org

Out: 250-cliff2.int.unixathome.org

Out: 250-PIPELINING

Out: 250-SIZE 10485760000

Out: 250-ETRN

Out: 250-ENHANCEDSTATUSCODES

Out: 250-8BITMIME

Out: 250-DSN

Out: 250-SMTPUTF8

Out: 250 CHUNKING

In: MAIL FROM:<nagios@webserver.int.unixathome.org>

Out: 452 4.3.1 Insufficient system storage

Session aborted, reason: lost connection

For other details, see the local mail logfile

There were also a few emails from MAIL FROM: but most were like the above.

Log entries

The log entries for the sending host are:

Feb 18 12:22:30 webserver dma[15199]: new mail from user=nagios uid=181 envelope_from=<nagios@webserver.int.unixathome.org>

Feb 18 12:22:30 webserver dma[15199]: mail to=<dan@langille.org> queued as 15199.3f8617a420a0

Feb 18 12:22:30 webserver dma[15199.3f8617a420a0][98813]: <dan@langille.org> trying delivery

Feb 18 12:22:30 webserver dma[15199.3f8617a420a0][98813]: using smarthost (cliff.int.unixathome.org:25)

Feb 18 12:22:30 webserver dma[15199.3f8617a420a0][98813]: trying remote delivery to cliff.int.unixathome.org [10.55.0.44] pref 0

Feb 18 12:22:30 webserver dma[15199.3f8617a420a0][98813]: remote delivery deferred: cliff.int.unixathome.org [10.55.0.44] failed after MAIL FROM: 452 4.3.1 Insufficient system storage

Feb 18 12:22:30 webserver dma[15199.3f8617a420a0][98813]: trying remote delivery to cliff.int.unixathome.org [10.55.0.14] pref 0

Feb 18 12:22:30 webserver dma[15199.3f8617a420a0][98813]: <dan@langille.org> delivery successful

On the receiving host (cliff2), I found:

Feb 18 12:22:02 cliff2 postfix/smtpd[95569]: disconnect from webserver.int.unixathome.org[10.55.0.3] helo=1 quit=1 commands=2

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: connect from webserver.int.unixathome.org[10.55.0.3]

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: Anonymous TLS connection established from webserver.int.unixathome.org[10.55.0.3]: TLSv1.3 with cipher TLS_AES_256_GCM_SHA384 (256/256 bits) key-exchange x25519 server-signature RSA-PSS (2048 bits) server-digest SHA256

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: NOQUEUE: reject: MAIL from webserver.int.unixathome.org[10.55.0.3]: 452 4.3.1 Insufficient system storage; proto=ESMTP helo=<webserver.int.unixathome.org>

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: warning: not enough free space in mail queue: 15472168960 bytes < 1.5*message size limit

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: NOQUEUE: lost connection after MAIL from webserver.int.unixathome.org[10.55.0.3]

Feb 18 12:22:30 cliff2 postfix/cleanup[98873]: 767072C399: message-id=<20260218122230.767072C399@cliff2.int.unixathome.org>

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: disconnect from webserver.int.unixathome.org[10.55.0.3] ehlo=2 starttls=1 mail=0/1 commands=3/4

Feb 18 12:22:30 cliff2 postfix/qmgr[7445]: 767072C399: from=<double-bounce@cliff2.int.unixathome.org>, size=1277, nrcpt=1 (queue active)

Feb 18 12:22:30 cliff2 postfix/cleanup[98873]: 79B732C911: message-id=<20260218122230.767072C399@cliff2.int.unixathome.org>

Feb 18 12:22:30 cliff2 postfix/local[98877]: 767072C399: to=<postmaster@cliff2.int.unixathome.org>, orig_to=<postmaster>, relay=local, delay=0.02, delays=0.01/0.01/0/0, dsn=2.0.0, status=sent (forwarded as 79B732C911)

Feb 18 12:22:30 cliff2 postfix/qmgr[7445]: 79B732C911: from=<double-bounce@cliff2.int.unixathome.org>, size=1434, nrcpt=1 (queue active)

Feb 18 12:22:30 cliff2 postfix/qmgr[7445]: 767072C399: removed

Feb 18 12:22:30 cliff2 postfix/smtp[98880]: Untrusted TLS connection established to smtp.fastmail.com[103.168.172.60]:587: TLSv1.3 with cipher TLS_AES_256_GCM_SHA384 (256/256 bits) key-exchange x25519

Feb 18 12:22:30 cliff2 postfix/smtp[98880]: 79B732C911: to=<dan@langille.org>, orig_to=<postmaster>, relay=smtp.fastmail.com[103.168.172.60]:587, delay=0.36, delays=0/0.01/0.11/0.23, dsn=2.0.0, status=sent (250 2.0.0 Ok: queued as CF51A800C2 ti_phl-compute-08_3001043_1771417350_11 via phl-compute-08)

Feb 18 12:22:30 cliff2 postfix/qmgr[7445]: 79B732C911: removed

The mail queues on both hosts are empty.

Is it really a space issue?

No, it’s a mail configuration issue. See line 5 of the cliff2 log above: the "warning: not enough free space in mail queue" entry.

That message repeats:

[12:35 cliff2 dvl ~] % sudo grep 'warning: not enough free space in mail queue' /var/log/maillog

Feb 18 04:46:54 cliff2 postfix/smtpd[11796]: warning: not enough free space in mail queue: 15723220992 bytes < 1.5*message size limit

Feb 18 04:47:35 cliff2 postfix/smtpd[11796]: warning: not enough free space in mail queue: 15720923136 bytes < 1.5*message size limit

Feb 18 05:47:10 cliff2 postfix/smtpd[84061]: warning: not enough free space in mail queue: 15652507648 bytes < 1.5*message size limit

Feb 18 06:31:40 cliff2 postfix/smtpd[3824]: warning: not enough free space in mail queue: 15704023040 bytes < 1.5*message size limit

Feb 18 06:31:50 cliff2 postfix/smtpd[3824]: warning: not enough free space in mail queue: 15688192000 bytes < 1.5*message size limit

Feb 18 06:32:20 cliff2 postfix/smtpd[3824]: warning: not enough free space in mail queue: 15684222976 bytes < 1.5*message size limit

Feb 18 07:35:50 cliff2 postfix/smtpd[90728]: warning: not enough free space in mail queue: 15561146368 bytes < 1.5*message size limit

Feb 18 07:40:51 cliff2 postfix/smtpd[10376]: warning: not enough free space in mail queue: 15549181952 bytes < 1.5*message size limit

Feb 18 08:27:51 cliff2 postfix/smtpd[32105]: warning: not enough free space in mail queue: 15599861760 bytes < 1.5*message size limit

Feb 18 08:27:51 cliff2 postfix/smtpd[38446]: warning: not enough free space in mail queue: 15599861760 bytes < 1.5*message size limit

Feb 18 08:31:10 cliff2 postfix/smtpd[49465]: warning: not enough free space in mail queue: 15538733056 bytes < 1.5*message size limit

Feb 18 08:31:41 cliff2 postfix/smtpd[49465]: warning: not enough free space in mail queue: 15529779200 bytes < 1.5*message size limit

Feb 18 10:22:30 cliff2 postfix/smtpd[2402]: warning: not enough free space in mail queue: 15584747520 bytes < 1.5*message size limit

Feb 18 11:22:30 cliff2 postfix/smtpd[6039]: warning: not enough free space in mail queue: 15494496256 bytes < 1.5*message size limit

Feb 18 12:02:02 cliff2 postfix/smtpd[6761]: warning: not enough free space in mail queue: 15571550208 bytes < 1.5*message size limit

Feb 18 12:22:30 cliff2 postfix/smtpd[95569]: warning: not enough free space in mail queue: 15472168960 bytes < 1.5*message size limit

[12:35 cliff2 dvl ~] %

It seems someone wants to send a bigger message. 15GB email? I don’t think so. You’re not sending that. At first, I thought, I’ll just boost the limit. In this case, no. Don’t send me that email.

I suspect the email is from Nagios. I suspect the email is generated by a notification and for some reason, it has exploded.
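The arithmetic behind that warning can be checked by hand. The postconf(5) entry for queue_minfree says that, by default, the Postfix SMTP server rejects MAIL FROM when free space in the queue file system is less than 1.5*$message_size_limit. The following is a minimal sketch, not anything taken from Postfix itself: it assumes the limit is the value advertised in the "250-SIZE 10485760000" line of the transcript, and the variable names are only for illustration.

```python
# message_size_limit as advertised in the EHLO response ("250-SIZE 10485760000").
MESSAGE_SIZE_LIMIT = 10_485_760_000

# The documented default: smtpd wants at least 1.5 * message_size_limit free
# in the queue file system. Integer arithmetic avoids float rounding.
threshold = MESSAGE_SIZE_LIMIT * 3 // 2

# Free-space values copied from the first and last warnings in the grep output.
reported_free = {
    "04:46:54": 15_723_220_992,
    "12:22:30": 15_472_168_960,
}

for when, free in reported_free.items():
    shortfall = threshold - free
    print(f"{when}: free={free} threshold={threshold} short by {shortfall} bytes")
```

Read this way, nobody needs to be sending a 15GB message. With a limit of roughly 10GB, any queue file system with less than about 15.7GB free refuses every message, however small. The first warning was short by only about 5MB.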

Nagios restart

I’ve discovered that restarting Nagios reproduces the problem.

Let’s deliver that email locally by commenting out this line from /etc/mail/aliases:

#root: dan@langille.org

… and restarting Nagios did not generate the issue.

Also interesting: I’m seeing similar reports such as Postfix SMTP server: errors from unifi01.int.unixathome.org[10.55.0.131] – yet the mail log on unifi01 has no matching entries. Logging is working:

[16:38 unifi01 dvl ~] % sudo cat /var/log/maillog

Feb 18 00:00:00 unifi01 newsyslog[98880]: logfile turned over

Feb 18 16:38:45 unifi01 dma[4d136][84888]: new mail from user=dvl uid=1002 envelope_from=<dvl@unifi01.int.unixathome.org>

Feb 18 16:38:45 unifi01 dma[4d136][84888]: mail to=<dan@langille.org> queued as 4d136.372c11442000

Feb 18 16:38:45 unifi01 dma[4d136.372c11442000][84890]: <dan@langille.org> trying delivery

Feb 18 16:38:45 unifi01 dma[4d136.372c11442000][84890]: using smarthost (cliff.int.unixathome.org:25)

Feb 18 16:38:45 unifi01 dma[4d136.372c11442000][84890]: trying remote delivery to cliff.int.unixathome.org [10.55.0.14] pref 0

Feb 18 16:38:45 unifi01 dma[4d136.372c11442000][84890]: <dan@langille.org> delivery successful

Yet, cliff2 says mail came from there:

Feb 18 16:23:41 cliff2 postfix/smtpd[28059]: connect from unifi01.int.unixathome.org[10.55.0.131]

Feb 18 16:23:41 cliff2 postfix/smtpd[28059]: Anonymous TLS connection established from unifi01.int.unixathome.org[10.55.0.131]: TLSv1.3 with cipher TLS_AES_256_GCM_SHA384 (256/256 bits) key-exchange x25519 server-signature RSA-PSS (2048 bits) server-digest SHA256

Feb 18 16:23:41 cliff2 postfix/smtpd[28059]: NOQUEUE: reject: MAIL from unifi01.int.unixathome.org[10.55.0.131]: 452 4.3.1 Insufficient system storage; proto=ESMTP helo=<unifi01.int.unixathome.org>

Feb 18 16:23:41 cliff2 postfix/smtpd[28059]: warning: not enough free space in mail queue: 15225958400 bytes < 1.5*message size limit

Feb 18 16:23:41 cliff2 postfix/cleanup[28068]: EF82B2C5A0: message-id=<20260218162341.EF82B2C5A0@cliff2.int.unixathome.org>

Feb 18 16:23:41 cliff2 postfix/smtpd[28059]: disconnect from unifi01.int.unixathome.org[10.55.0.131] ehlo=2 starttls=1 mail=0/1 rset=1 quit=1 commands=5/6

Feb 18 16:23:41 cliff2 postfix/qmgr[7445]: EF82B2C5A0: from=<double-bounce@cliff2.int.unixathome.org>, size=1278, nrcpt=1 (queue active)

Feb 18 16:23:41 cliff2 postfix/cleanup[28068]: F2A122BB68: message-id=<20260218162341.EF82B2C5A0@cliff2.int.unixathome.org>

Feb 18 16:23:41 cliff2 postfix/local[28069]: EF82B2C5A0: to=<postmaster@cliff2.int.unixathome.org>, orig_to=<postmaster>, relay=local, delay=0.02, delays=0.01/0.01/0/0, dsn=2.0.0, status=sent (forwarded as F2A122BB68)

Feb 18 16:23:41 cliff2 postfix/qmgr[7445]: F2A122BB68: from=<double-bounce@cliff2.int.unixathome.org>, size=1435, nrcpt=1 (queue active)

Feb 18 16:23:41 cliff2 postfix/qmgr[7445]: EF82B2C5A0: removed

Feb 18 16:23:42 cliff2 postfix/smtp[28070]: Untrusted TLS connection established to smtp.fastmail.com[103.168.172.60]:587: TLSv1.3 with cipher TLS_AES_256_GCM_SHA384 (256/256 bits) key-exchange x25519 server-signature RSA-PSS (2048 bits) server-digest SHA256

Feb 18 16:23:42 cliff2 postfix/smtp[28070]: F2A122BB68: to=<dan@langille.org>, orig_to=<postmaster>, relay=smtp.fastmail.com[103.168.172.60]:587, delay=0.65, delays=0/0.01/0.21/0.42, dsn=2.0.0, status=sent (250 2.0.0 Ok: queued as 8FF3E80104 ti_phl-compute-08_3096974_1771431822_1 via phl-compute-08)

Feb 18 16:23:42 cliff2 postfix/qmgr[7445]: F2A122BB68: removed

Now I’m wondering if this is a Postfix configuration issue?

I compared the postconf -n output from the two hosts:

[12:00 pro05 dvl ~/tmp] % diff -ruN cliff1 cliff2

--- cliff1 2026-02-18 12:00:00

+++ cliff2 2026-02-18 11:59:20

@@ -1,4 +1,4 @@

-[16:59 cliff1 dvl ~] % postconf -n

+[16:58 cliff2 dvl ~] % postconf -n

alias_maps = hash:/etc/mail/aliases

command_directory = /usr/local/sbin

compatibility_level = 3.6

@@ -17,7 +17,7 @@

message_size_limit = 10485760000

meta_directory = /usr/local/libexec/postfix

mydestination = $myhostname, localhost.$mydomain, localhost

-myhostname = cliff1.int.unixathome.org

+myhostname = cliff2.int.unixathome.org

mynetworks_style = class

newaliases_path = /usr/local/bin/newaliases

queue_directory = /var/spool/postfix

[12:00 pro05 dvl ~/tmp] %

Nothing.

Another source

Feb 18 18:02:02 dns-hidden-master dma[3ad8b][35058]: new mail from user=logcheck uid=915 envelope_from=<logcheck@dns-hidden-master.int.unixathome.org>

Feb 18 18:02:02 dns-hidden-master dma[3ad8b][35058]: mail to=<dan@langille.org> queued as 3ad8b.51b887042000

Feb 18 18:02:02 dns-hidden-master dma[3ad8b.51b887042000][35110]: <dan@langille.org> trying delivery

Feb 18 18:02:02 dns-hidden-master dma[3ad8b.51b887042000][35110]: using smarthost (cliff.int.unixathome.org:25)

Feb 18 18:02:02 dns-hidden-master dma[3ad8b.51b887042000][35110]: trying remote delivery to cliff.int.unixathome.org [10.55.0.44] pref 0

Feb 18 18:02:02 dns-hidden-master dma[3ad8b.51b887042000][35110]: remote delivery deferred: cliff.int.unixathome.org [10.55.0.44] failed after MAIL FROM: 452 4.3.1 Insufficient system storage

Feb 18 18:02:02 dns-hidden-master dma[3ad8b.51b887042000][35110]: trying remote delivery to cliff.int.unixathome.org [10.55.0.14] pref 0

Then I tried manually from that same source:

[18:03 dns-hidden-master dvl ~] % echo testing | mail dan@langille.org

The log entries:

Feb 18 18:03:51 dns-hidden-master dma[39652]: new mail from user=dvl uid=1002 envelope_from=<dvl@dns-hidden-master.int.unixathome.org>

Feb 18 18:03:51 dns-hidden-master dma[39652]: mail to=<dan@langille.org> queued as 39652.3ee88ac42000

Feb 18 18:03:51 dns-hidden-master dma[39652.3ee88ac42000][45462]: <dan@langille.org> trying delivery

Feb 18 18:03:51 dns-hidden-master dma[39652.3ee88ac42000][45462]: using smarthost (cliff.int.unixathome.org:25)

Feb 18 18:03:51 dns-hidden-master dma[39652.3ee88ac42000][45462]: trying remote delivery to cliff.int.unixathome.org [10.55.0.44] pref 0

Feb 18 18:03:51 dns-hidden-master dma[39652.3ee88ac42000][45462]: remote delivery deferred: cliff.int.unixathome.org [10.55.0.44] failed after MAIL FROM: 452 4.3.1 Insufficient system storage

Feb 18 18:03:51 dns-hidden-master dma[39652.3ee88ac42000][45462]: trying remote delivery to cliff.int.unixathome.org [10.55.0.14] pref 0

Feb 18 18:03:51 dns-hidden-master dma[39652.3ee88ac42000][45462]: <dan@langille.org> delivery successful

So, a very small message is triggering the event. I tried again. It is repeatable.

Simple telnet

telnet is a time-honored tool. In the session below, the EHLO and MAIL FROM lines are the ones I typed.

[18:24 mydev dvl ~] % telnet cliff2 25

Trying 10.55.0.44...

Connected to cliff2.int.unixathome.org.

Escape character is '^]'.

220 cliff2.int.unixathome.org ESMTP Postfix

EHLO mydev

250-cliff2.int.unixathome.org

250-PIPELINING

250-SIZE 10485760000

250-ETRN

250-STARTTLS

250-ENHANCEDSTATUSCODES

250-8BITMIME

250-DSN

250-SMTPUTF8

250 CHUNKING

MAIL FROM:dan@langille.org

452 4.3.1 Insufficient system storage

So, that’s the same as the stuff at the top of this post, apart from STARTTLS, which I was not prepared to do from within telnet.
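The same probe can be scripted, which makes it easy to run against both hosts. This is a rough sketch using Python's standard smtplib; the function names probe_mail_from and classify are made up for this example. Note that smtplib wraps the sender in angle brackets, unlike the bare address typed into telnet.

```python
import smtplib


def classify(code: int) -> str:
    """Map an SMTP reply code to a coarse outcome."""
    if 200 <= code < 300:
        return "accepted"
    if 400 <= code < 500:
        return "temporary failure"
    if 500 <= code < 600:
        return "permanent failure"
    return "unexpected"


def probe_mail_from(host: str, sender: str, port: int = 25, timeout: float = 10.0):
    """Connect, send EHLO and MAIL FROM, and return (code, text) of the MAIL FROM reply.

    No RCPT TO or DATA is sent, so no message is ever queued. Leaving the
    with-block sends QUIT.
    """
    with smtplib.SMTP(host, port, timeout=timeout) as smtp:
        smtp.ehlo()
        code, text = smtp.mail(sender)
        return code, text.decode(errors="replace")
```

From a host on the internal network, calling probe_mail_from("cliff2.int.unixathome.org", "dan@langille.org") would be expected to return the 452 reply seen above, which classify() reports as a temporary failure.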

I tried this with cliff1 – flawless. All good there.

Restarting postfix, then the jail

I’m sure it’s not space:

[16:54 r730-01 dvl ~] % zpool list

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data01 5.81T 6.36G 5.81T - - 2% 0% 1.00x ONLINE -

data02 928G 705G 223G - - 67% 75% 1.00x ONLINE -

data03 7.25T 1.30T 5.95T - - 49% 17% 1.00x ONLINE -

data04 29.1T 6.11T 23.0T - - 0% 21% 1.00x ONLINE -

zroot 107G 47.9G 59.1G - - 54% 44% 1.00x ONLINE -

[18:30 r730-01 dvl ~] % zfs list | grep cliff2

data02/jails/cliff2 4.00G 13.6G 2.46G /jails/cliff2

It’s all just one filesystem in there:

[18:36 cliff2 dvl ~] % zfs list

no datasets available

[18:36 cliff2 dvl ~] % df -h

Filesystem Size Used Avail Capacity Mounted on

data02/jails/cliff2 16G 2.5G 14G 15% /

Let’s try:

[18:30 cliff2 dvl ~] % sudo service postfix restart

postfix/postfix-script: stopping the Postfix mail system

postfix/postfix-script: starting the Postfix mail system

[18:30 cliff2 dvl ~] %

That didn’t help.

A jail restart did not help either:

[18:31 r730-01 dvl ~] % sudo service jail restart cliff2

Stopping jails: cliff2.

Starting jails: cliff2.

Let’s try snapshots:

[18:37 r730-01 dvl ~] % zfs list -r -t snapshot data02/jails/cliff2 | grep -v @autosnap

NAME USED AVAIL REFER MOUNTPOINT

data02/jails/cliff2@mkjail-202509051453 725M - 2.24G -

data02/jails/cliff2@mkjail-202602101242 716M - 2.38G -

Let’s destroy those two:

[18:37 r730-01 dvl ~] % sudo zfs destroy data02/jails/cliff2@mkjail-202509051453

[18:37 r730-01 dvl ~] % sudo zfs destroy data02/jails/cliff2@mkjail-202602101242

Umm, that seems to have fixed it:

[18:39 mydev dvl ~] % telnet cliff2 25

Trying 10.55.0.44...

Connected to cliff2.int.unixathome.org.

Escape character is '^]'.

220 cliff2.int.unixathome.org ESMTP Postfix

EHLO mydev

250-cliff2.int.unixathome.org

250-PIPELINING

250-SIZE 10485760000

250-ETRN

250-STARTTLS

250-ENHANCEDSTATUSCODES

250-8BITMIME

250-DSN

250-SMTPUTF8

250 CHUNKING

MAIL FROM:dan@langille.org

250 2.1.0 Ok

WTF?

[18:39 r730-01 dvl ~] % zfs list data02/jails/cliff2

NAME USED AVAIL REFER MOUNTPOINT

data02/jails/cliff2 2.53G 15.0G 2.46G /jails/cliff2

[18:39 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 704G 224G - - 66% 75% 1.00x ONLINE -

[18:40 r730-01 dvl ~] %
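One way to read the change, offered as arithmetic rather than a diagnosis: Postfix wants 1.5 times message_size_limit free, and the AVAIL figure for data02/jails/cliff2 moved from 13.6G before the snapshots were destroyed to 15.0G afterwards. The sketch below assumes zfs list units are powers of 1024 and that the rounded figures are close enough for a comparison; the helper name is hypothetical.

```python
# zfs list prints sizes in powers of 1024 (K, M, G, T).
UNITS = {"B": 1, "K": 1024, "M": 1024**2, "G": 1024**3, "T": 1024**4}


def zfs_size_to_bytes(text: str) -> int:
    """Convert a rounded zfs list size such as '13.6G' to an approximate byte count."""
    number, unit = text[:-1], text[-1]
    return int(float(number) * UNITS[unit])


# message_size_limit from the "250-SIZE 10485760000" line in the transcripts.
MESSAGE_SIZE_LIMIT = 10_485_760_000
threshold = MESSAGE_SIZE_LIMIT * 3 // 2

for label, avail in (("before destroying snapshots", "13.6G"),
                     ("after destroying snapshots", "15.0G")):
    free = zfs_size_to_bytes(avail)
    verdict = "accept" if free >= threshold else "reject"
    print(f"{label}: AVAIL {avail} ~ {free} bytes -> {verdict}")
```

The threshold works out to about 14.65G in these units, so 13.6G falls below it and 15.0G clears it. That accounts for the telnet result changing, but not for why the dataset had so little available in a pool with 223G free.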

No quota or reservation

The pool had 223G free at the start of this.

Let’s look around…

[18:40 r730-01 dvl ~] % zfs get all data02/jails/cliff2 | grep quota

data02/jails/cliff2 quota none default

data02/jails/cliff2 refquota none default

data02/jails/cliff2 defaultuserquota 0 -

data02/jails/cliff2 defaultgroupquota 0 -

data02/jails/cliff2 defaultprojectquota 0 -

data02/jails/cliff2 defaultuserobjquota 0 -

data02/jails/cliff2 defaultgroupobjquota 0 -

data02/jails/cliff2 defaultprojectobjquota 0 -

[18:41 r730-01 dvl ~] % zfs get all data02/jails | grep quota

data02/jails quota none default

data02/jails refquota none default

data02/jails defaultuserquota 0 -

data02/jails defaultgroupquota 0 -

data02/jails defaultprojectquota 0 -

data02/jails defaultuserobjquota 0 -

data02/jails defaultgroupobjquota 0 -

data02/jails defaultprojectobjquota 0 -

[18:41 r730-01 dvl ~] % zfs get all data02 | grep quota

data02 quota none default

data02 refquota none default

data02 defaultuserquota 0 -

data02 defaultgroupquota 0 -

data02 defaultprojectquota 0 -

data02 defaultuserobjquota 0 -

data02 defaultgroupobjquota 0 -

data02 defaultprojectobjquota 0 -

[18:41 r730-01 dvl ~] % zfs get all data02 | grep reserve

[18:41 r730-01 dvl ~] % zfs get all data02 | grep res

data02 compressratio 1.79x -

data02 reservation none default

data02 compression zstd received

data02 aclinherit restricted default

data02 sharesmb off default

data02 refreservation none default

data02 usedbyrefreservation 0B -

data02 refcompressratio 1.00x -

[18:41 r730-01 dvl ~] % zfs get all data02/jails/cliff2 | grep reservation

data02/jails/cliff2 reservation none default

data02/jails/cliff2 refreservation none default

data02/jails/cliff2 usedbyrefreservation 0B -

[18:42 r730-01 dvl ~] % zfs get all data02/jails | grep reservation

data02/jails reservation none default

data02/jails refreservation none default

data02/jails usedbyrefreservation 0B -

[18:42 r730-01 dvl ~] % zfs get all data02 | grep reservation

data02 reservation none default

data02 refreservation none default

data02 usedbyrefreservation 0B -

[18:42 r730-01 dvl ~] %

So, what was blocking this?
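The greps above cover three datasets. A reservation on any other dataset in the pool also reduces the space available to everything else, so a recursive query is one way to widen the search. The sketch below assumes the tab-separated output of zfs get with -H (scripted) and -p (exact numbers); the function names are hypothetical, and the sample dataset name and size are invented for illustration.

```python
import subprocess


def find_reservations(zfs_get_output: str):
    """Return (dataset, property, bytes) for every non-zero reservation found in
    tab-separated `zfs get -H -p -o name,property,value` output."""
    found = []
    for line in zfs_get_output.splitlines():
        if not line.strip():
            continue
        name, prop, value = line.split("\t")
        # Unset reservations may appear as 0, none, or - depending on the flags used.
        if value in ("none", "-", "0"):
            continue
        found.append((name, prop, int(value)))
    return found


def query_pool(pool: str) -> str:
    """Run the recursive query; only works on a host that has the zfs command."""
    cmd = ["zfs", "get", "-r", "-H", "-p", "-t", "filesystem,volume",
           "-o", "name,property,value", "reservation,refreservation", pool]
    return subprocess.run(cmd, check=True, capture_output=True, text=True).stdout


# Hypothetical sample output: the dataset name and size are invented.
sample = (
    "data02\treservation\t0\n"
    "data02\trefreservation\t0\n"
    "data02/example\treservation\t214748364800\n"
    "data02/example\trefreservation\t0\n"
)
for name, prop, size in find_reservations(sample):
    print(f"{name}: {prop} = {size} bytes")
```

On r730-01, find_reservations(query_pool("data02")) would list every dataset in the pool holding space back, rather than only the three checked by hand.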

zpool status

As an afterthought:

[18:42 r730-01 dvl ~] % zpool status

pool: data01

state: ONLINE

status: Some supported and requested features are not enabled on the pool.

The pool can still be used, but some features are unavailable.

action: Enable all features using 'zpool upgrade'. Once this is done,

the pool may no longer be accessible by software that does not support

the features. See zpool-features(7) for details.

scan: scrub repaired 0B in 00:00:06 with 0 errors on Thu Feb 12 03:53:00 2026

config:

NAME STATE READ WRITE CKSUM

data01 ONLINE 0 0 0

raidz2-0 ONLINE 0 0 0

gpt/Y7P0A022TEVE ONLINE 0 0 0

gpt/Y7P0A02ATEVE ONLINE 0 0 0

gpt/Y7P0A02DTEVE ONLINE 0 0 0

gpt/Y7P0A02GTEVE ONLINE 0 0 0

gpt/Y7P0A02LTEVE ONLINE 0 0 0

gpt/Y7P0A02MTEVE ONLINE 0 0 0

gpt/Y7P0A02QTEVE ONLINE 0 0 0

gpt/Y7P0A033TEVE ONLINE 0 0 0

errors: No known data errors

pool: data02

state: ONLINE

status: Some supported and requested features are not enabled on the pool.

The pool can still be used, but some features are unavailable.

action: Enable all features using 'zpool upgrade'. Once this is done,

the pool may no longer be accessible by software that does not support

the features. See zpool-features(7) for details.

scan: scrub repaired 0B in 00:08:44 with 0 errors on Wed Feb 18 04:03:38 2026

config:

NAME STATE READ WRITE CKSUM

data02 ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

gpt/S6WSNJ0T208743F ONLINE 0 0 0

gpt/S6WSNJ0T207774T ONLINE 0 0 0

errors: No known data errors

pool: data03

state: ONLINE

status: Some supported and requested features are not enabled on the pool.

The pool can still be used, but some features are unavailable.

action: Enable all features using 'zpool upgrade'. Once this is done,

the pool may no longer be accessible by software that does not support

the features. See zpool-features(7) for details.

scan: scrub repaired 0B in 00:49:32 with 0 errors on Thu Feb 12 04:42:48 2026

config:

NAME STATE READ WRITE CKSUM

data03 ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

gpt/WD_22492H800867 ONLINE 0 0 0

gpt/WD_230151801284 ONLINE 0 0 0

mirror-1 ONLINE 0 0 0

gpt/WD_230151801478 ONLINE 0 0 0

gpt/WD_230151800473 ONLINE 0 0 0

errors: No known data errors

pool: data04

state: ONLINE

status: Some supported and requested features are not enabled on the pool.

The pool can still be used, but some features are unavailable.

action: Enable all features using 'zpool upgrade'. Once this is done,

the pool may no longer be accessible by software that does not support

the features. See zpool-features(7) for details.

scan: scrub repaired 0B in 00:48:58 with 0 errors on Wed Feb 18 04:44:04 2026

config:

NAME STATE READ WRITE CKSUM

data04 ONLINE 0 0 0

raidz2-0 ONLINE 0 0 0

gpt/S7KGNU0Y722875X ONLINE 0 0 0

gpt/S7KGNU0Y915666E ONLINE 0 0 0

gpt/S7KGNU0Y912937J ONLINE 0 0 0

gpt/S7KGNU0Y912955D ONLINE 0 0 0

gpt/S7U8NJ0Y716854P ONLINE 0 0 0

gpt/S7U8NJ0Y716801F ONLINE 0 0 0

gpt/S757NS0Y700758M ONLINE 0 0 0

gpt/S757NS0Y700760R ONLINE 0 0 0

errors: No known data errors

pool: zroot

state: ONLINE

status: Some supported and requested features are not enabled on the pool.

The pool can still be used, but some features are unavailable.

action: Enable all features using 'zpool upgrade'. Once this is done,

the pool may no longer be accessible by software that does not support

the features. See zpool-features(7) for details.

scan: scrub repaired 0B in 00:02:22 with 0 errors on Tue Feb 17 04:03:50 2026

config:

NAME STATE READ WRITE CKSUM

zroot ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

gpt/zfs0_20170718AA0000185556 ONLINE 0 0 0

gpt/zfs1_20170719AA1178164201 ONLINE 0 0 0

errors: No known data errors

Cause found

The cause has been found: another dataset, with a reservation. It came up during the ZFS production users call, where they asked me a few questions and I tried a few things.

First, write some data.

[22:25 cliff2 dvl ~] % sudo dd if=/dev/random of=/tmp/delete=me bs=1M count=20000 status=progress

dd: /tmp/delete=me: No space left on device

16172+0 records in

16171+1 records out

16956915712 bytes transferred in 79.099811 secs (214373656 bytes/sec)

OK, it filled up. Next, we looked at this.

[22:31 r730-01 dvl ~] % zpool status data02

pool: data02

state: ONLINE

status: Some supported and requested features are not enabled on the pool.

The pool can still be used, but some features are unavailable.

action: Enable all features using 'zpool upgrade'. Once this is done,

the pool may no longer be accessible by software that does not support

the features. See zpool-features(7) for details.

scan: scrub repaired 0B in 00:08:44 with 0 errors on Wed Feb 18 04:03:38 2026

config:

NAME STATE READ WRITE CKSUM

data02 ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

gpt/S6WSNJ0T208743F ONLINE 0 0 0

gpt/S6WSNJ0T207774T ONLINE 0 0 0

errors: No known data errors

[22:31 r730-01 dvl ~] % zfs list data02/jails

NAME USED AVAIL REFER MOUNTPOINT

data02/jails 363G 0B 9.54G /jails

[22:32 r730-01 dvl ~] % zfs list -r data02

NAME USED AVAIL REFER MOUNTPOINT

data02 900G 0B 96K none

data02/freshports 294G 0B 88K none

data02/freshports/dev-ingress01 228G 0B 88K none

data02/freshports/dev-ingress01/dvl-src 197G 0B 197G /jails/dev-ingress01/usr/home/dvl/src

data02/freshports/dev-ingress01/freshports 22.5G 0B 2.06G /jails/dev-ingress01/var/db/freshports

data02/freshports/dev-ingress01/freshports/cache 2.11M 0B 132K /jails/dev-ingress01/var/db/freshports/cache

data02/freshports/dev-ingress01/freshports/cache/html 1.88M 0B 1.88M /jails/dev-ingress01/var/db/freshports/cache/html

data02/freshports/dev-ingress01/freshports/cache/spooling 104K 0B 104K /jails/dev-ingress01/var/db/freshports/cache/spooling

data02/freshports/dev-ingress01/freshports/message-queues 20.5G 0B 11.7M /jails/dev-ingress01/var/db/freshports/message-queues

data02/freshports/dev-ingress01/freshports/message-queues/archive 20.4G 0B 11.3G /jails/dev-ingress01/var/db/freshports/message-queues/archive

data02/freshports/dev-ingress01/ingress 5.46G 0B 132K /jails/dev-ingress01/var/db/ingress

data02/freshports/dev-ingress01/ingress/latest_commits 528K 0B 108K /jails/dev-ingress01/var/db/ingress/latest_commits

data02/freshports/dev-ingress01/ingress/message-queues 1.44M 0B 628K /jails/dev-ingress01/var/db/ingress/message-queues

data02/freshports/dev-ingress01/ingress/repos 5.45G 0B 120K /jails/dev-ingress01/var/db/ingress/repos

data02/freshports/dev-ingress01/ingress/repos/doc 525M 0B 522M /jails/dev-ingress01/var/db/ingress/repos/doc

data02/freshports/dev-ingress01/ingress/repos/ports 2.28G 0B 2.27G /jails/dev-ingress01/var/db/ingress/repos/ports

data02/freshports/dev-ingress01/ingress/repos/src 2.66G 0B 2.65G /jails/dev-ingress01/var/db/ingress/repos/src

data02/freshports/dev-ingress01/jails 3.00G 0B 104K /jails/dev-ingress01/jails

data02/freshports/dev-ingress01/jails/freshports 3.00G 0B 405M /jails/dev-ingress01/jails/freshports

data02/freshports/dev-ingress01/jails/freshports/ports 2.61G 0B 2.61G /jails/dev-ingress01/jails/freshports/usr/ports

data02/freshports/dev-ingress01/modules 4.38M 0B 4.38M /jails/dev-ingress01/usr/local/lib/perl5/site_perl/FreshPorts

data02/freshports/dev-ingress01/scripts 3.30M 0B 3.30M /jails/dev-ingress01/usr/local/libexec/freshports

data02/freshports/dev-nginx01 54.6M 0B 96K none

data02/freshports/dev-nginx01/www 54.5M 0B 96K /jails/dev-nginx01/usr/local/www

data02/freshports/dev-nginx01/www/freshports 51.7M 0B 51.7M /jails/dev-nginx01/usr/local/www/freshports

data02/freshports/dev-nginx01/www/freshsource 2.71M 0B 2.71M /jails/dev-nginx01/usr/local/www/freshsource

data02/freshports/dvl-ingress01 18.9G 0B 96K none

data02/freshports/dvl-ingress01/dvl-src 80.3M 0B 80.3M /jails/dvl-ingress01/usr/home/dvl/src

data02/freshports/dvl-ingress01/freshports 4.38G 0B 96K /jails/dvl-ingress01/var/db/freshports

data02/freshports/dvl-ingress01/freshports/cache 2.31M 0B 96K /jails/dvl-ingress01/var/db/freshports/cache

data02/freshports/dvl-ingress01/freshports/cache/html 2.01M 0B 1.93M /jails/dvl-ingress01/var/db/freshports/cache/html

data02/freshports/dvl-ingress01/freshports/cache/spooling 208K 0B 208K /jails/dvl-ingress01/var/db/freshports/cache/spooling

data02/freshports/dvl-ingress01/freshports/message-queues 4.38G 0B 16.8M /jails/dvl-ingress01/var/db/freshports/message-queues

data02/freshports/dvl-ingress01/freshports/message-queues/archive 4.37G 0B 4.37G /jails/dvl-ingress01/var/db/freshports/message-queues/archive

data02/freshports/dvl-ingress01/ingress 8.60G 0B 140K /jails/dvl-ingress01/var/db/ingress

data02/freshports/dvl-ingress01/ingress/latest_commits 100K 0B 100K /jails/dvl-ingress01/var/db/ingress/latest_commits

data02/freshports/dvl-ingress01/ingress/message-queues 160K 0B 160K /jails/dvl-ingress01/var/db/ingress/message-queues

data02/freshports/dvl-ingress01/ingress/repos 8.60G 0B 112K /jails/dvl-ingress01/var/db/ingress/repos

data02/freshports/dvl-ingress01/ingress/repos/doc 954M 0B 520M /jails/dvl-ingress01/var/db/ingress/repos/doc

data02/freshports/dvl-ingress01/ingress/repos/ports 3.43G 0B 2.22G /jails/dvl-ingress01/var/db/ingress/repos/ports

data02/freshports/dvl-ingress01/ingress/repos/src 4.24G 0B 2.56G /jails/dvl-ingress01/var/db/ingress/repos/src

data02/freshports/dvl-ingress01/jails 5.83G 0B 104K /jails/dvl-ingress01/jails

data02/freshports/dvl-ingress01/jails/freshports 5.83G 0B 404M /jails/dvl-ingress01/jails/freshports

data02/freshports/dvl-ingress01/jails/freshports/ports 5.43G 0B 2.64G /jails/dvl-ingress01/jails/freshports/usr/ports

data02/freshports/dvl-ingress01/modules 2.67M 0B 2.67M /jails/dvl-ingress01/usr/local/lib/perl5/site_perl/FreshPorts

data02/freshports/dvl-ingress01/scripts 2.34M 0B 2.34M /jails/dvl-ingress01/usr/local/libexec/freshports

data02/freshports/dvl-nginx01 22.2M 0B 96K none

data02/freshports/dvl-nginx01/www 22.1M 0B 96K none

data02/freshports/dvl-nginx01/www/freshports 20.2M 0B 20.2M /jails/dvl-nginx01/usr/local/www/freshports

data02/freshports/dvl-nginx01/www/freshsource 1.78M 0B 1.78M /jails/dvl-nginx01/usr/local/www/freshsource

data02/freshports/jailed 3.97G 0B 96K none

data02/freshports/jailed/dev-ingress01 96K 0B 96K none

data02/freshports/jailed/dev-nginx01 1.36G 0B 96K none

data02/freshports/jailed/dev-nginx01/cache 1.36G 0B 96K /var/db/freshports/cache

data02/freshports/jailed/dev-nginx01/cache/categories 1.28M 0B 1.20M /var/db/freshports/cache/categories

data02/freshports/jailed/dev-nginx01/cache/commits 96K 0B 96K /var/db/freshports/cache/commits

data02/freshports/jailed/dev-nginx01/cache/daily 12.0M 0B 11.9M /var/db/freshports/cache/daily

data02/freshports/jailed/dev-nginx01/cache/general 4.38M 0B 4.30M /var/db/freshports/cache/general

data02/freshports/jailed/dev-nginx01/cache/news 184K 0B 96K /var/db/freshports/cache/news

data02/freshports/jailed/dev-nginx01/cache/packages 5.19M 0B 5.10M /var/db/freshports/cache/packages

data02/freshports/jailed/dev-nginx01/cache/pages 96K 0B 96K /var/db/freshports/cache/pages

data02/freshports/jailed/dev-nginx01/cache/ports 1.34G 0B 1.34G /var/db/freshports/cache/ports

data02/freshports/jailed/dev-nginx01/cache/spooling 224K 0B 120K /var/db/freshports/cache/spooling

data02/freshports/jailed/dvl-ingress01 192K 0B 96K none

data02/freshports/jailed/dvl-ingress01/distfiles 96K 0B 96K none

data02/freshports/jailed/dvl-nginx01 1.56M 0B 96K none

data02/freshports/jailed/dvl-nginx01/cache 1.37M 0B 148K /var/db/freshports/cache

data02/freshports/jailed/dvl-nginx01/cache/categories 96K 0B 96K /var/db/freshports/cache/categories

data02/freshports/jailed/dvl-nginx01/cache/commits 96K 0B 96K /var/db/freshports/cache/commits

data02/freshports/jailed/dvl-nginx01/cache/daily 96K 0B 96K /var/db/freshports/cache/daily

data02/freshports/jailed/dvl-nginx01/cache/general 96K 0B 96K /var/db/freshports/cache/general

data02/freshports/jailed/dvl-nginx01/cache/news 176K 0B 96K /var/db/freshports/cache/news

data02/freshports/jailed/dvl-nginx01/cache/packages 96K 0B 96K /var/db/freshports/cache/packages

data02/freshports/jailed/dvl-nginx01/cache/pages 96K 0B 96K /var/db/freshports/cache/pages

data02/freshports/jailed/dvl-nginx01/cache/ports 208K 0B 128K /var/db/freshports/cache/ports

data02/freshports/jailed/dvl-nginx01/cache/spooling 200K 0B 120K /var/db/freshports/cache/spooling

data02/freshports/jailed/dvl-nginx01/freshports 96K 0B 96K none

data02/freshports/jailed/stage-ingress01 192K 0B 96K none

data02/freshports/jailed/stage-ingress01/data 96K 0B 96K none

data02/freshports/jailed/stage-nginx01 1.62G 0B 96K none

data02/freshports/jailed/stage-nginx01/cache 1.62G 0B 248K /var/db/freshports/cache

data02/freshports/jailed/stage-nginx01/cache/categories 2.53M 0B 2.44M /var/db/freshports/cache/categories

data02/freshports/jailed/stage-nginx01/cache/commits 96K 0B 96K /var/db/freshports/cache/commits

data02/freshports/jailed/stage-nginx01/cache/daily 8.57M 0B 8.48M /var/db/freshports/cache/daily

data02/freshports/jailed/stage-nginx01/cache/general 5.54M 0B 5.45M /var/db/freshports/cache/general

data02/freshports/jailed/stage-nginx01/cache/news 184K 0B 96K /var/db/freshports/cache/news

data02/freshports/jailed/stage-nginx01/cache/packages 13.4M 0B 13.3M /var/db/freshports/cache/packages

data02/freshports/jailed/stage-nginx01/cache/pages 96K 0B 96K /var/db/freshports/cache/pages

data02/freshports/jailed/stage-nginx01/cache/ports 1.59G 0B 1.59G /var/db/freshports/cache/ports

data02/freshports/jailed/stage-nginx01/cache/spooling 232K 0B 120K /var/db/freshports/cache/spooling

data02/freshports/jailed/test-ingress01 192K 0B 96K none

data02/freshports/jailed/test-ingress01/data 96K 0B 96K none

data02/freshports/jailed/test-nginx01 1008M 0B 96K none

data02/freshports/jailed/test-nginx01/cache 1008M 0B 236K /var/db/freshports/cache

data02/freshports/jailed/test-nginx01/cache/categories 2.32M 0B 2.24M /var/db/freshports/cache/categories

data02/freshports/jailed/test-nginx01/cache/commits 96K 0B 96K /var/db/freshports/cache/commits

data02/freshports/jailed/test-nginx01/cache/daily 12.4M 0B 12.3M /var/db/freshports/cache/daily

data02/freshports/jailed/test-nginx01/cache/general 3.47M 0B 3.36M /var/db/freshports/cache/general

data02/freshports/jailed/test-nginx01/cache/news 184K 0B 96K /var/db/freshports/cache/news

data02/freshports/jailed/test-nginx01/cache/packages 4.51M 0B 4.43M /var/db/freshports/cache/packages

data02/freshports/jailed/test-nginx01/cache/pages 96K 0B 96K /var/db/freshports/cache/pages

data02/freshports/jailed/test-nginx01/cache/ports 985M 0B 985M /var/db/freshports/cache/ports

data02/freshports/jailed/test-nginx01/cache/spooling 232K 0B 120K /var/db/freshports/cache/spooling

data02/freshports/stage-ingress01 19.1G 0B 96K none

data02/freshports/stage-ingress01/cache 2.14M 0B 96K /jails/stage-ingress01/var/db/freshports/cache

data02/freshports/stage-ingress01/cache/html 1.94M 0B 1.86M /jails/stage-ingress01/var/db/freshports/cache/html

data02/freshports/stage-ingress01/cache/spooling 104K 0B 104K /jails/stage-ingress01/var/db/freshports/cache/spooling

data02/freshports/stage-ingress01/freshports 10.7G 0B 96K none

data02/freshports/stage-ingress01/freshports/archive 10.7G 0B 10.7G /jails/stage-ingress01/var/db/freshports/message-queues/archive

data02/freshports/stage-ingress01/freshports/message-queues 9.96M 0B 7.89M /jails/stage-ingress01/var/db/freshports/message-queues

data02/freshports/stage-ingress01/ingress 5.33G 0B 96K /jails/stage-ingress01/var/db/ingress

data02/freshports/stage-ingress01/ingress/latest_commits 404K 0B 100K /jails/stage-ingress01/var/db/ingress/latest_commits

data02/freshports/stage-ingress01/ingress/message-queues 1012K 0B 180K /jails/stage-ingress01/var/db/ingress/message-queues

data02/freshports/stage-ingress01/ingress/repos 5.33G 0B 5.31G /jails/stage-ingress01/var/db/ingress/repos

data02/freshports/stage-ingress01/jails 405M 0B 104K /jails/stage-ingress01/jails

data02/freshports/stage-ingress01/jails/freshports 404M 0B 404M /jails/stage-ingress01/jails/freshports

data02/freshports/stage-ingress01/ports 2.63G 0B 2.63G /jails/stage-ingress01/jails/freshports/usr/ports

data02/freshports/test-ingress01 23.9G 0B 96K none

data02/freshports/test-ingress01/freshports 12.8G 0B 2.05G /jails/test-ingress01/var/db/freshports

data02/freshports/test-ingress01/freshports/cache 2.09M 0B 96K /jails/test-ingress01/var/db/freshports/cache

data02/freshports/test-ingress01/freshports/cache/html 1.89M 0B 1.89M /jails/test-ingress01/var/db/freshports/cache/html

data02/freshports/test-ingress01/freshports/cache/spooling 104K 0B 104K /jails/test-ingress01/var/db/freshports/cache/spooling

data02/freshports/test-ingress01/freshports/message-queues 10.8G 0B 9.07M /jails/test-ingress01/var/db/freshports/message-queues

data02/freshports/test-ingress01/freshports/message-queues/archive 10.8G 0B 10.8G /jails/test-ingress01/var/db/freshports/message-queues/archive

data02/freshports/test-ingress01/ingress 8.10G 0B 128K /jails/test-ingress01/var/db/ingress

data02/freshports/test-ingress01/ingress/latest_commits 344K 0B 100K /jails/test-ingress01/var/db/ingress/latest_commits

data02/freshports/test-ingress01/ingress/message-queues 932K 0B 164K /jails/test-ingress01/var/db/ingress/message-queues

data02/freshports/test-ingress01/ingress/repos 8.10G 0B 5.33G /jails/test-ingress01/var/db/ingress/repos

data02/freshports/test-ingress01/jails 3.00G 0B 96K /jails/test-ingress01/jails

data02/freshports/test-ingress01/jails/freshports 3.00G 0B 405M /jails/test-ingress01/jails/freshports

data02/freshports/test-ingress01/jails/freshports/ports 2.60G 0B 2.60G /jails/test-ingress01/jails/freshports/usr/ports

data02/jails 363G 0B 9.54G /jails

data02/jails/bacula 17.8G 0B 16.3G /jails/bacula

data02/jails/bacula-sd-02 4.49G 0B 2.91G /jails/bacula-sd-02

data02/jails/bacula-sd-03 5.62G 0B 4.11G /jails/bacula-sd-03

data02/jails/besser 9.29G 0B 5.72G /jails/besser

data02/jails/certs 3.93G 0B 2.41G /jails/certs

data02/jails/certs-rsync 3.91G 0B 2.42G /jails/certs-rsync

data02/jails/cliff2 18.3G 0B 18.3G /jails/cliff2

data02/jails/dev-ingress01 6.07G 0B 3.97G /jails/dev-ingress01

data02/jails/dev-nginx01 5.02G 0B 3.36G /jails/dev-nginx01

data02/jails/dns-hidden-master 4.36G 0B 2.64G /jails/dns-hidden-master

data02/jails/dns1 12.0G 0B 4.90G /jails/dns1

data02/jails/dvl-ingress01 10.1G 0B 6.29G /jails/dvl-ingress01

data02/jails/dvl-nginx01 2.89G 0B 1.28G /jails/dvl-nginx01

data02/jails/git 5.94G 0B 4.26G /jails/git

data02/jails/jail_within_jail 1.44G 0B 585M /jails/jail_within_jail

data02/jails/mqtt01 4.68G 0B 3.03G /jails/mqtt01

data02/jails/mydev 26.7G 0B 19.8G /jails/mydev

data02/jails/mysql01 14.7G 0B 5.40G /jails/mysql01

data02/jails/mysql02 5.75G 0B 7.79G /jails/mysql02

data02/jails/nsnotify 4.98G 0B 2.60G /jails/nsnotify

data02/jails/pg01 50.9G 0B 10.8G /jails/pg01

data02/jails/pg02 13.7G 0B 11.0G /jails/pg02

data02/jails/pg03 14.5G 0B 10.8G /jails/pg03

data02/jails/pkg01 17.9G 0B 13.7G /jails/pkg01

data02/jails/samdrucker 5.74G 0B 4.16G /jails/samdrucker

data02/jails/serpico 4.06G 0B 2.50G /jails/serpico

data02/jails/stage-ingress01 7.25G 0B 3.63G /jails/stage-ingress01

data02/jails/stage-nginx01 3.02G 0B 1.44G /jails/stage-nginx01

data02/jails/svn 11.6G 0B 9.77G /jails/svn

data02/jails/talos 3.82G 0B 2.38G /jails/talos

data02/jails/test-ingress01 3.89G 0B 1.89G /jails/test-ingress01

data02/jails/test-nginx01 2.99G 0B 1.38G /jails/test-nginx01

data02/jails/unifi01 32.4G 0B 12.5G /jails/unifi01

data02/jails/webserver 13.3G 0B 11.3G /jails/webserver

data02/reserved 180G 180G 96K none

data02/vm 62.0G 0B 6.41G /usr/local/vm

data02/vm/freebsd-test 701M 0B 112K /usr/local/vm/freebsd-test

data02/vm/freebsd-test/disk0 700M 0B 700M -

data02/vm/hass 52.0G 0B 13.4G /usr/local/vm/hass

data02/vm/home-assistant 351M 0B 351M /usr/local/vm/home-assistant

data02/vm/myguest 2.55G 0B 2.55G /usr/local/vm/myguest

After putting that into a gist and pasting it to the channel, I noticed the highlighted line 180 above.

Then I remembered. Some time ago I’d created this dataset to stop the zpool from filling up. I had read about this approach, and tried it.

I freed up space by deleting that /tmp/delete=me file (I didn’t intend that to be an =).

The next day, I ran this command to clear the reservation and free up that “free space”.

[13:23 r730-01 dvl ~] % sudo zfs set refreservation=0 data02/reserved

[13:24 r730-01 dvl ~] %
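A forgotten reservation like this one is easy to overlook in a long `zfs list`. The following is a minimal sketch of a filter that picks out datasets with a non-zero refreservation. The function name `nonzero_reservations` is hypothetical, and the sample input is illustrative (only the 180G on data02/reserved comes from the listing above; the zero values are assumed).

```shell
#!/bin/sh
# nonzero_reservations: read "name<TAB>value" lines on stdin, the shape
# produced by `zfs get -r -Hp -o name,value refreservation POOL`,
# and print the names whose refreservation is set.
nonzero_reservations() {
    awk -F '\t' '$2 != 0 && $2 != "none" { print $1 }'
}

# Illustrative input: 180G expressed in bytes for data02/reserved,
# assumed zeros for the other two datasets.
printf '%s\t%s\n' \
    'data02/jails'    0 \
    'data02/reserved' 193273528320 \
    'data02/vm'       0 \
  | nonzero_reservations

# On a live system (not run here):
#   zfs get -r -Hp -o name,value refreservation data02 | nonzero_reservations
```

Note that zvols normally carry a refreservation of their own, so they would show up in this list on a real pool.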

Monitoring

What has failed me is my monitoring. The zpool was getting full, but not in this sense:

[14:20 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 705G 223G - - 67% 75% 1.00x ONLINE -

I’m sure the default monitoring on this zpool is checking the CAP (capacity) – I may look at adjusting the monitoring.

Cleaning up old snapshots

I need to move most of data02 into data04.

[14:24 r730-01 dvl ~] % zpool list

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data01 5.81T 6.36G 5.81T - - 2% 0% 1.00x ONLINE -

data02 928G 618G 310G - - 56% 66% 1.00x ONLINE -

data03 7.25T 1.27T 5.98T - - 48% 17% 1.00x ONLINE -

data04 29.1T 6.11T 23.0T - - 0% 21% 1.00x ONLINE -

zroot 107G 48.2G 58.8G - - 53% 45% 1.00x ONLINE -

In the short term, I’ll buy some time by deleting old snapshots. Look at all this old stuff:

[14:31 r730-01 dvl ~] % zfs list -o name -r -t snapshot data02 | grep @mkjail

data02/jails/bacula@mkjail-202509051453

data02/jails/bacula@mkjail-202602101338

data02/jails/bacula-sd-02@mkjail-202509051453

data02/jails/bacula-sd-02@mkjail-202602101338

data02/jails/bacula-sd-03@mkjail-202509051453

data02/jails/bacula-sd-03@mkjail-202602101338

data02/jails/besser@mkjail-202602101619

data02/jails/certs@mkjail-202509051453

data02/jails/certs@mkjail-202602101338

data02/jails/certs-rsync@mkjail-202509051453

data02/jails/certs-rsync@mkjail-202602101338

data02/jails/dev-ingress01@mkjail-202509051453

data02/jails/dev-ingress01@mkjail-202602101338

data02/jails/dev-nginx01@mkjail-202509051453

data02/jails/dev-nginx01@mkjail-202602101338

data02/jails/dns-hidden-master@mkjail-202509051453

data02/jails/dns-hidden-master@mkjail-202602101513

data02/jails/dns1@mkjail-202509051453

data02/jails/dns1@mkjail-202602101242

data02/jails/dvl-ingress01@mkjail-202509051453

data02/jails/dvl-ingress01@mkjail-202602101338

data02/jails/dvl-nginx01@mkjail-202509051453

data02/jails/dvl-nginx01@mkjail-202602101338

data02/jails/git@mkjail-202509051453

data02/jails/git@mkjail-202602101338

data02/jails/jail_within_jail@mkjail-202509051453

data02/jails/jail_within_jail@mkjail-202602101609

data02/jails/mqtt01@mkjail-202509051453

data02/jails/mqtt01@mkjail-202602101338

data02/jails/mydev@mkjail-202509051453

data02/jails/mydev@mkjail-202602101529

data02/jails/mysql01@mkjail-202509051453

data02/jails/mysql01@mkjail-202602101242

data02/jails/mysql02@mkjail-202602102333

data02/jails/nsnotify@mkjail-202509051453

data02/jails/nsnotify@mkjail-202602101551

data02/jails/nsnotify@mkjail-202602101558

data02/jails/pg01@mkjail-202509051453

data02/jails/pg01@mkjail-202602101338

data02/jails/pg02@mkjail-202509051453

data02/jails/pg02@mkjail-202602101338

data02/jails/pg03@mkjail-202509051453

data02/jails/pg03@mkjail-202602101338

data02/jails/pkg01@mkjail-202509051453

data02/jails/pkg01@mkjail-202602082233

data02/jails/samdrucker@mkjail-202509051453

data02/jails/samdrucker@mkjail-202602101338

data02/jails/serpico@mkjail-202509051453

data02/jails/serpico@mkjail-202602101536

data02/jails/stage-ingress01@mkjail-202509051453

data02/jails/stage-ingress01@mkjail-202602101338

data02/jails/stage-nginx01@mkjail-202509051453

data02/jails/stage-nginx01@mkjail-202602101338

data02/jails/svn@mkjail-202509051453

data02/jails/svn@mkjail-202602101338

data02/jails/talos@mkjail-202509051453

data02/jails/talos@mkjail-202602101338

data02/jails/test-ingress01@mkjail-202509051453

data02/jails/test-ingress01@mkjail-202602101338

data02/jails/test-nginx01@mkjail-202509051453

data02/jails/test-nginx01@mkjail-202602101338

data02/jails/unifi01@mkjail-202509051453

data02/jails/unifi01@mkjail-202602101605

data02/jails/webserver@mkjail-202509051453

data02/jails/webserver@mkjail-202602101338

Quickly scripted:

[14:32 r730-01 dvl ~] % zfs list -o name -r -t snapshot data02 | grep @mkjail | xargs -n 1 sudo zfs destroy

[14:32 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 618G 310G - - 56% 66% 1.00x ONLINE -

But there’s more; that was just the mkjail-related snapshots.

[14:42 r730-01 dvl ~] % zfs list -o name -r -t snapshot data02 | grep -v @autosnap | grep -v @empty

NAME

data02/freshports/dvl-ingress01/ingress/repos/doc@for-dvl-ingress01

data02/freshports/dvl-ingress01/ingress/repos/ports@for-dvl-ingress01

data02/freshports/dvl-ingress01/ingress/repos/src@for-dvl-ingress01

data02/jails/besser@before.26.1.0

data02/jails/besser@before.26.1.1

data02/jails/besser@before.26.1.1_1

data02/jails/besser@before.26.1.1_2

data02/jails/besser@before.26.2.0

data02/jails/dns1@before.bind918

data02/jails/dns1@bind.9.18

data02/jails/mysql01@MySQL-8.0

data02/jails/mysql01@mysql80-part2

data02/jails/mysql01@mysql80-part3

data02/jails/pkg01@before15.0

data02/jails/unifi01@before.mongodb60-6.0.24

@autosnap is sanoid-related. @empty is on some special FreshPorts filesystems. The rest look good to go.

Let’s try a dry run, and notice we don’t need sudo for that.

[14:49 r730-01 dvl ~] % zfs list -o name -r -t snapshot data02 | grep -v @autosnap | grep -v @empty | xargs -n 1 zfs destroy -n

cannot open 'NAME': dataset does not exist

cannot destroy 'data02/jails/mysql01@mysql80-part3': snapshot has dependent clones

use '-R' to destroy the following datasets:

data02/jails/mysql02@autosnap_2026-02-13_00:00:01_daily

data02/jails/mysql02@autosnap_2026-02-14_00:00:11_daily

data02/jails/mysql02@autosnap_2026-02-15_00:00:01_daily

data02/jails/mysql02@autosnap_2026-02-16_00:00:02_daily

data02/jails/mysql02@autosnap_2026-02-17_00:00:00_daily

data02/jails/mysql02@autosnap_2026-02-18_00:00:06_daily

data02/jails/mysql02@autosnap_2026-02-19_00:00:03_daily

data02/jails/mysql02@autosnap_2026-02-19_08:00:04_hourly

data02/jails/mysql02@autosnap_2026-02-19_09:00:01_hourly

data02/jails/mysql02@autosnap_2026-02-19_10:00:02_hourly

data02/jails/mysql02@autosnap_2026-02-19_10:00:02_frequently

data02/jails/mysql02@autosnap_2026-02-19_10:15:09_frequently

data02/jails/mysql02@autosnap_2026-02-19_10:30:02_frequently

data02/jails/mysql02@autosnap_2026-02-19_10:45:09_frequently

data02/jails/mysql02@autosnap_2026-02-19_11:00:01_hourly

data02/jails/mysql02@autosnap_2026-02-19_11:00:01_frequently

data02/jails/mysql02@autosnap_2026-02-19_11:15:08_frequently

data02/jails/mysql02@autosnap_2026-02-19_11:30:00_frequently

data02/jails/mysql02@autosnap_2026-02-19_11:45:08_frequently

data02/jails/mysql02@autosnap_2026-02-19_12:00:01_hourly

data02/jails/mysql02@autosnap_2026-02-19_12:00:01_frequently

data02/jails/mysql02@autosnap_2026-02-19_12:15:08_frequently

data02/jails/mysql02@autosnap_2026-02-19_12:30:01_frequently

data02/jails/mysql02@autosnap_2026-02-19_12:45:09_frequently

data02/jails/mysql02@autosnap_2026-02-19_13:00:02_hourly

data02/jails/mysql02@autosnap_2026-02-19_13:00:02_frequently

data02/jails/mysql02@autosnap_2026-02-19_13:15:09_frequently

data02/jails/mysql02@autosnap_2026-02-19_13:30:01_frequently

data02/jails/mysql02@autosnap_2026-02-19_13:45:08_frequently

data02/jails/mysql02@autosnap_2026-02-19_14:00:03_hourly

data02/jails/mysql02@autosnap_2026-02-19_14:00:03_frequently

data02/jails/mysql02@autosnap_2026-02-19_14:15:09_frequently

data02/jails/mysql02@autosnap_2026-02-19_14:30:00_frequently

data02/jails/mysql02@autosnap_2026-02-19_14:45:10_frequently

data02/jails/mysql02

Two things:

- Add -H to remove that NAME header

- don’t touch data02/jails/mysql01@mysql80-part3 yet

[14:50 r730-01 dvl ~] % zfs list -Ho name -r -t snapshot data02 | grep -v @autosnap | grep -v @empty | grep -v mysql80-part3 | xargs -n 1 zfs destroy -n

[14:51 r730-01 dvl ~] %

That looks good. This time, for real (with sudo and without -n):

[14:51 r730-01 dvl ~] % zfs list -Ho name -r -t snapshot data02 | grep -v @autosnap | grep -v @empty | grep -v mysql80-part3 | xargs -n 1 sudo zfs destroy

[14:54 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 591G 337G - - 53% 63% 1.00x ONLINE -
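The exclusion filter used in the dry run and the real run above can be sketched as a reusable function, so the keep-list lives in one place. This is only a sketch: the function name `filter_snapshots` is hypothetical, and the sample names are taken from the listings above.

```shell
#!/bin/sh
# filter_snapshots: read snapshot names on stdin and drop the ones to keep.
# Snapshot names contain exactly one "@", so matching on "@name" avoids
# excluding a dataset whose path merely contains one of these strings.
filter_snapshots() {
    grep -v -e '@autosnap' -e '@empty' -e '@mysql80-part3$'
}

# Sample names from the listings above; only the two "before" snapshots
# should survive the filter.
printf '%s\n' \
    'data02/jails/besser@before.26.1.0' \
    'data02/jails/mysql01@mysql80-part3' \
    'data02/jails/mysql02@autosnap_2026-02-19_14:45:10_frequently' \
    'data02/jails/pkg01@before15.0' \
  | filter_snapshots

# On a live system, always start with the dry run (not run here):
#   zfs list -Ho name -r -t snapshot data02 | filter_snapshots | xargs -n 1 zfs destroy -n
```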

So what?

Yeah, this was my fault. As most things computing-related are. It’s never the system’s fault.

I am grateful that although some things stopped working, nothing seems corrupted. I don’t mean from a bitrot/checksum point of view (with respect to ZFS). I mean the MySQL database is not corrupted, the PostgreSQL databases are fine (as expected), none of the FreshPorts nodes were affected at all (they kept processing incoming commits).

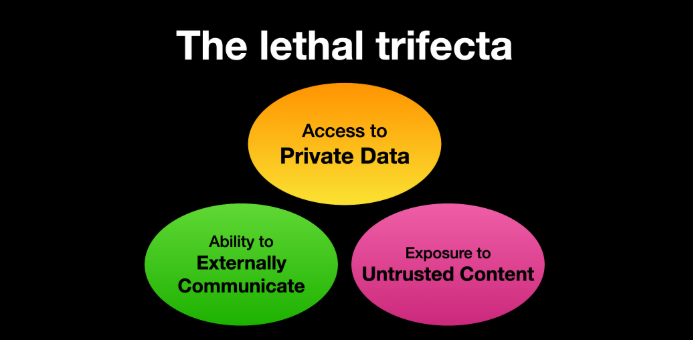

The Postfix issue seems to have been the canary. I suspect it won’t accept an incoming message unless it can allocate a certain amount of space. It could not. It coughed. If I had not had a reservation on data02/reserved, usage would have grown to 80%, at which point monitoring would have triggered.

I think my reservation has to be less than 20% of the pool size. Let’s reserve 15%: 0.15 * 928 = 139.2 (GB).

Let’s test this idea.
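The 20% ceiling follows from the alert threshold: monitoring warns at 80% CAP, and space held by an unused refreservation does not count as allocated, so the reservation has to fit inside the remaining 20%. A small sketch of that arithmetic follows; the function name `reservation_size` is hypothetical. In practice the margin is likely a little tighter, since `zfs list` reports less usable space than the SIZE column of `zpool list`.

```shell
#!/bin/sh
# reservation_size POOL_SIZE FRACTION
# Prints FRACTION of POOL_SIZE, in the same unit as POOL_SIZE, to one decimal.
reservation_size() {
    awk -v size="$1" -v frac="$2" 'BEGIN { printf "%.1f\n", size * frac }'
}

reservation_size 928 0.20   # ceiling implied by an 80% alert threshold
reservation_size 928 0.15   # the 15% chosen here
```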

Get the pool to 80%

Let’s trigger the monitoring alert.

[17:58 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 591G 337G - - 53% 63% 1.00x ONLINE -

How full is that? .8 * 928 = 742.4 which is 151.4 more than the 591 now in use (that’s all in GB).

Let’s write to a file and take up some space.

[18:17 cliff2 dvl ~] % sudo dd if=/dev/random of=/tmp/delete-me bs=1M count=151400 status=progress

158554128384 bytes (159 GB, 148 GiB) transferred 622.025s, 255 MB/s

151400+0 records in

151400+0 records out

158754406400 bytes transferred in 622.731688 secs (254932276 bytes/sec)

Close, oh, so close!

[18:25 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 739G 189G - - 67% 79% 1.00x ONLINE -
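The shortfall is probably a units issue: `zpool list` reports sizes in powers of 1024, and `bs=1M` is 1 MiB, so `count=151400` writes about 148 GiB (as dd itself reports) rather than 151.4. A sketch of the conversion, ignoring any pool-level overhead; the function name `dd_count_mib` is hypothetical:

```shell
#!/bin/sh
# dd_count_mib TARGET_FRACTION POOL_SIZE_GIB ALLOC_GIB
# Prints how many 1 MiB blocks must be written to raise ALLOC
# to TARGET_FRACTION of the pool size.
dd_count_mib() {
    awk -v t="$1" -v size="$2" -v alloc="$3" 'BEGIN {
        gib = t * size - alloc        # GiB still to write
        mib = gib * 1024              # 1 GiB = 1024 MiB
        count = int(mib)
        if (count < mib) count++      # round up to a whole block
        printf "%d\n", count
    }'
}

# 80% of a 928G pool with 591G already allocated
dd_count_mib 0.8 928 591
```

That comes out a few thousand blocks higher than 151400, which is roughly the gap the second file fills.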

Let’s do another 5G to another file:

[18:27 cliff2 dvl ~] % sudo dd if=/dev/random of=/tmp/delete-me-2 bs=1M count=5000 status=progress

5109710848 bytes (5110 MB, 4873 MiB) transferred 17.001s, 301 MB/s

5000+0 records in

5000+0 records out

5242880000 bytes transferred in 17.443215 secs (300568443 bytes/sec)

[18:31 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 743G 185G - - 68% 80% 1.00x ONLINE -

There we go! Target acquired.

Good, and the monitoring is triggered (after I manually scheduled a check): ZFS POOL ALARM: POOL data02 usage is WARNING (80%)

So let’s go with a reservation of 130G, and take note of the AVAIL value before and after.

[18:35 r730-01 dvl ~] % zfs list data02

NAME USED AVAIL REFER MOUNTPOINT

data02 744G 155G 96K none

[18:35 r730-01 dvl ~] % sudo zfs set refreservation=130G data02/reserved

[18:35 r730-01 dvl ~] % zfs list data02

NAME USED AVAIL REFER MOUNTPOINT

data02 874G 25.1G 96K none

[18:36 r730-01 dvl ~] %

Let’s delete my test files:

[18:34 r730-01 dvl ~] % zfs list data02

NAME USED AVAIL REFER MOUNTPOINT

data02 744G 155G 96K none

It says 155G available.

[18:37 cliff2 dvl ~] % sudo rm -rf /tmp/delete-me*

[18:37 cliff2 dvl ~] %

And now we have:

[18:37 r730-01 dvl ~] % zfs list data02

NAME USED AVAIL REFER MOUNTPOINT

data02 869G 30.0G 96K none

Pretty much the same. Guess why?

Snapshots.

Or in this case, one snapshot:

[18:39 r730-01 dvl ~] % zfs list -r -t snapshot data02/jails/cliff2 | tail -4

data02/jails/cliff2@autosnap_2026-02-19_18:00:06_hourly 0B - 2.46G -

data02/jails/cliff2@autosnap_2026-02-19_18:00:06_frequently 0B - 2.46G -

data02/jails/cliff2@autosnap_2026-02-19_18:15:30_frequently 376K - 2.46G -

data02/jails/cliff2@autosnap_2026-02-19_18:30:02_frequently 148G - 150G -

Let’s sacrifice that one:

[18:39 r730-01 dvl ~] % sudo zfs destroy data02/jails/cliff2@autosnap_2026-02-19_18:30:02_frequently

[18:40 r730-01 dvl ~] %

[18:40 r730-01 dvl ~] % zfs list data02

NAME USED AVAIL REFER MOUNTPOINT

data02 721G 178G 96K none

[18:40 r730-01 dvl ~] % zpool list data02

NAME SIZE ALLOC FREE CKPOINT EXPANDSZ FRAG CAP DEDUP HEALTH ALTROOT

data02 928G 591G 337G - - 52% 63% 1.00x ONLINE -

There, that’s better.
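Spotting which snapshot is pinning the space was done by eye above. With more snapshots it can be sketched as a small filter over parsable output. The function name `largest_snapshot` is hypothetical, and the byte counts in the sample are conversions of the 0B, 376K and 148G shown above, not values copied from the system.

```shell
#!/bin/sh
# largest_snapshot: read "name<TAB>used-bytes" lines on stdin, the shape
# produced by `zfs list -Hp -t snapshot -o name,used`, and print the name
# of the snapshot holding the most space.
largest_snapshot() {
    sort -t "$(printf '\t')" -k2,2n | tail -n 1 | cut -f1
}

# Sample input modelled on the cliff2 listing above.
printf '%s\t%s\n' \
    'data02/jails/cliff2@autosnap_2026-02-19_18:00:06_hourly'     0 \
    'data02/jails/cliff2@autosnap_2026-02-19_18:30:02_frequently' 158913789952 \
    'data02/jails/cliff2@autosnap_2026-02-19_18:15:30_frequently' 385024 \
  | largest_snapshot

# On a live system (not run here):
#   zfs list -Hp -r -t snapshot -o name,used data02/jails/cliff2 | largest_snapshot
```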

Overview

My monitoring didn’t account for the reservation. My zpool would (and did) fill up before triggering any monitoring.

I adjusted my reservation size based on existing monitoring.
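An alternative to sizing the reservation around the existing check is to have a check look at what the datasets see. An unused refreservation is counted in USED and subtracted from AVAIL in `zfs list`, while `zpool list` CAP ignores it. The following is a minimal sketch, not my actual monitoring plugin; the function name `pct_used` and the 80% threshold in the usage comment are assumptions.

```shell
#!/bin/sh
# pct_used USED AVAIL
# Prints the integer percentage of space in use as the datasets see it.
# Both arguments must be in the same unit (bytes from `zfs list -p`, or whole G).
pct_used() {
    used=$1
    avail=$2
    echo $(( used * 100 / (used + avail) ))
}

# Values from `zfs list data02` with the 130G reservation in place:
# USED 874G, AVAIL 25.1G (rounded down to 25 for integer arithmetic).
pct_used 874 25

# On a live system (not run here):
#   set -- $(zfs list -Hp -o used,avail data02)
#   [ "$(pct_used "$1" "$2")" -ge 80 ] && echo "WARNING: data02 is filling up"
```

With those numbers the dataset view is in the high nineties while `zpool list` was still reporting 80%.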

If I recall correctly, the post I read about reservation was based on: if the zpool fills up, this saves you some space, so you notice the problem, you solve the problem, thereby allowing the system to continue running. The theory being that the reservation is just stopping some rogue process/jail from completely filling up the zpool – it’s easier to work with a mostly full zpool than a 100% full zpool.

Yes, you could set a quota to restrict certain jails from exploding, but that seems more like a user-based tool (don’t let user foo use more than 100GB in their home dir).

Reading elsewhere, I think the primary use of reservations is to reserve future space not yet used. Or “A ZFS reservation is an allocation of disk space from the pool that is guaranteed to be available to a dataset”. Or in other words, always ensure that user foo has 150GB available from the pool.

In short: I’m not convinced reservation or quota is useful to me in this situation. However, I’ll keep the reservation on data02/reserved for now.

Thanks.